TorchServe: Increasing inference speed while improving efficiency - deployment - PyTorch Dev Discussions

Inference mode complains about inplace at torch.mean call, but I don't use inplace · Issue #70177 · pytorch/pytorch · GitHub

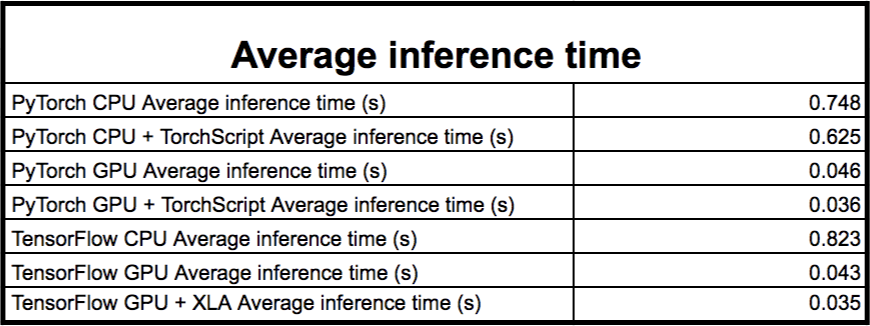

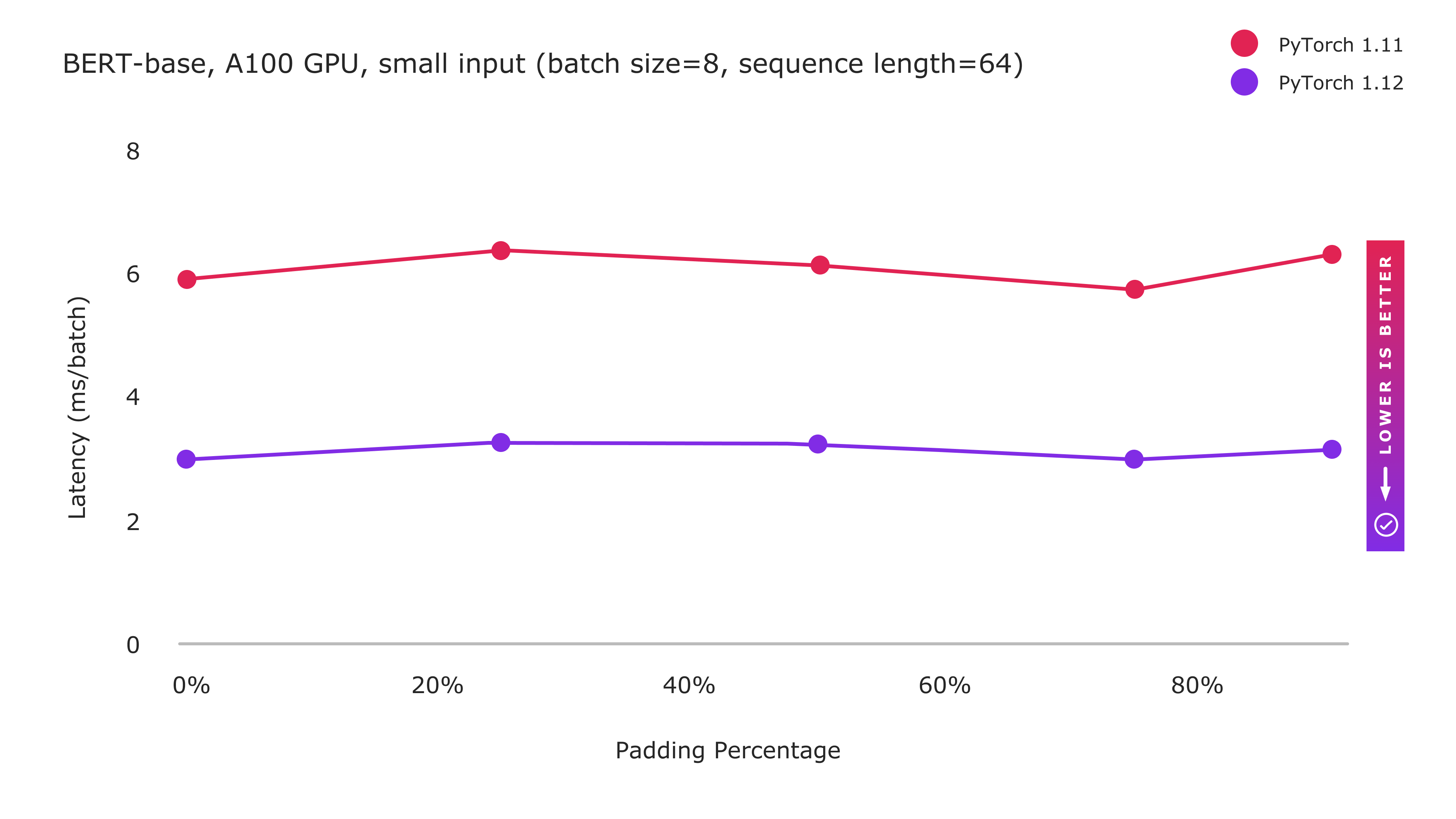

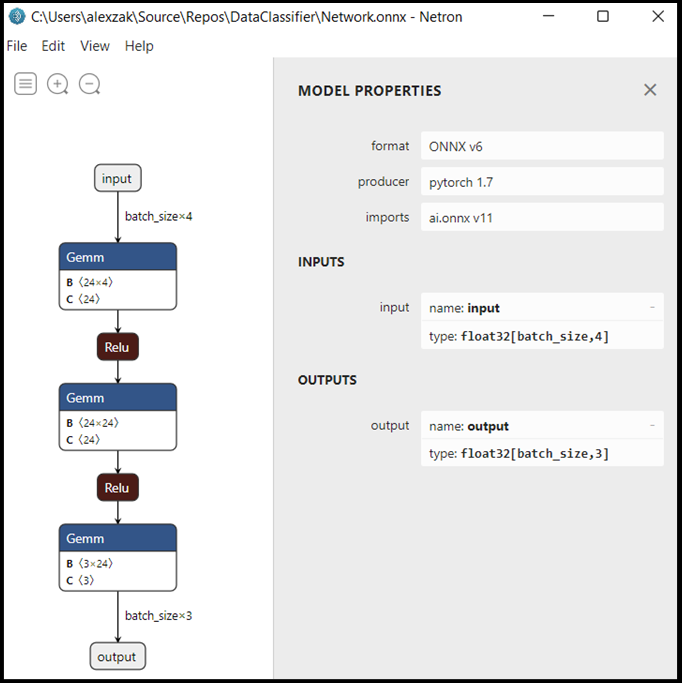

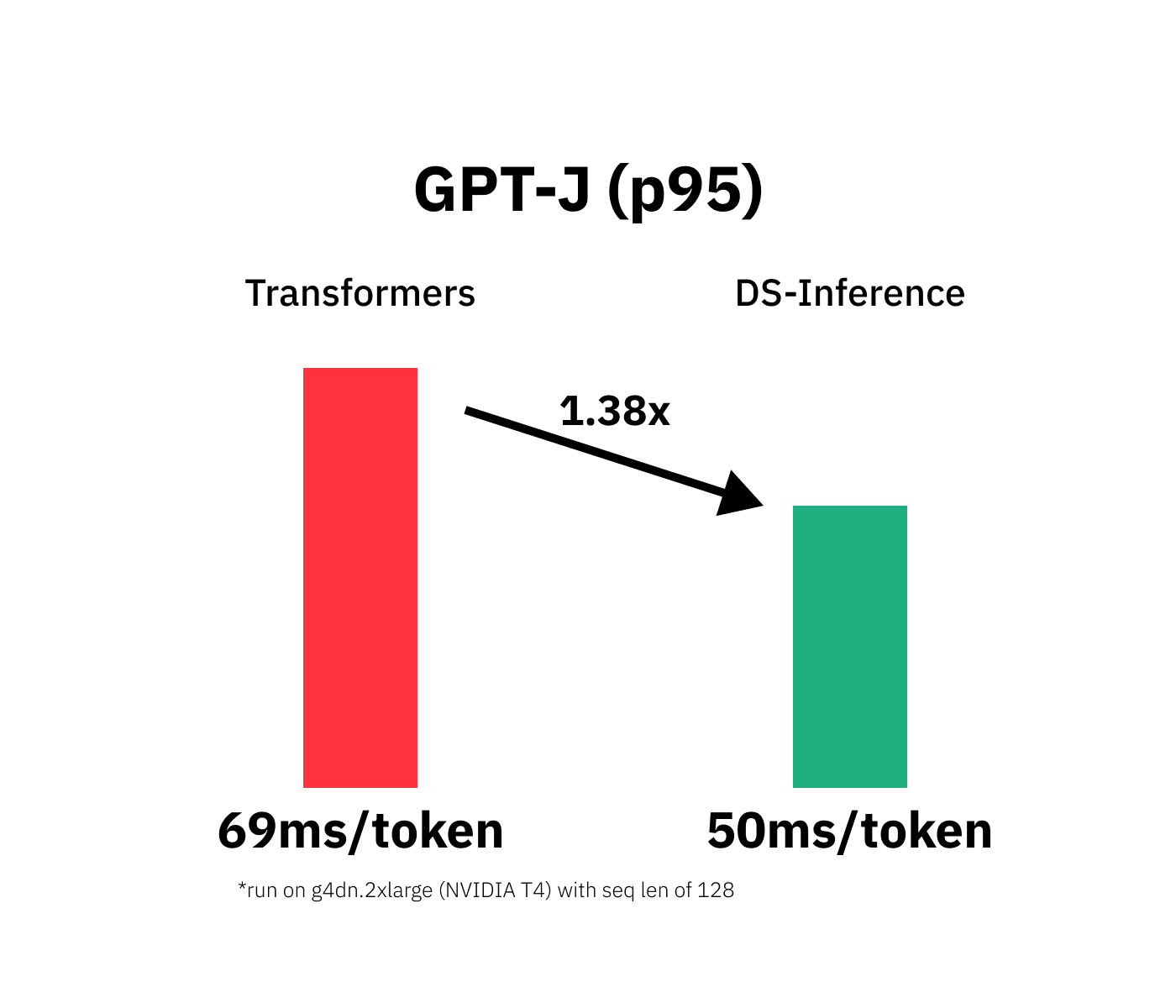

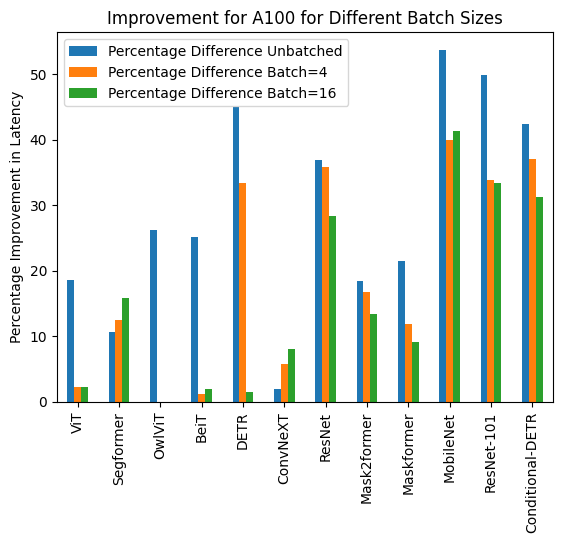

Deployment of Deep Learning models on Genesis Cloud - Deployment techniques for PyTorch models using TensorRT | Genesis Cloud Blog

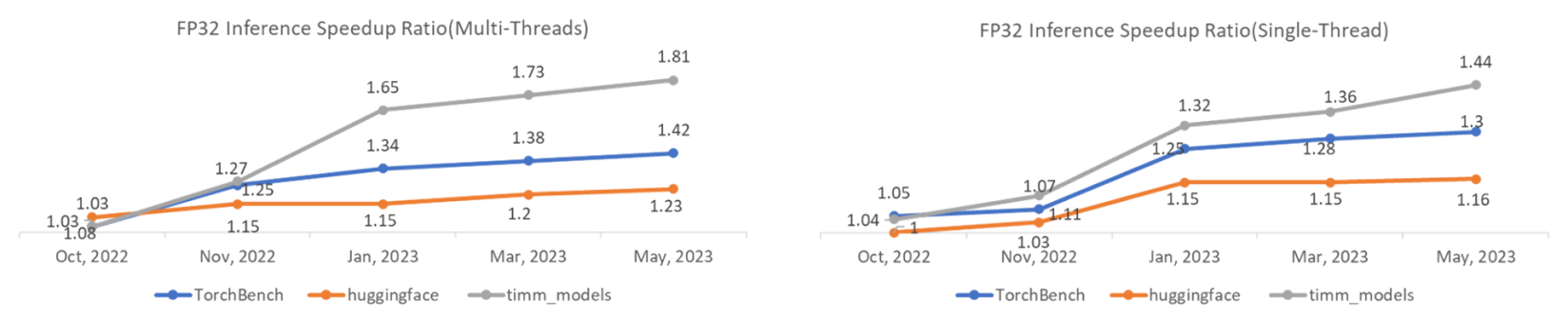

TorchDynamo Update: 1.48x geomean speedup on TorchBench CPU Inference - compiler - PyTorch Dev Discussions

Abubakar Abid on X: "3/3 Luckily, we don't have to disable these ourselves. Use PyTorch's `torch.inference_mode` decorator, which is a drop-in replacement for `torch.no_grad` ...as long as you need those tensors for anything
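The tweet describes `torch.inference_mode` as a drop-in replacement for `torch.no_grad`. The sketch below contrasts the two. It also shows the main caveat: outputs produced in inference mode are inference tensors, which cannot later be saved for backward in normal autograd unless they are cloned first. The small `Linear` model, the shapes, and the `predict` helper are arbitrary choices made for illustration.

```python
import torch

model = torch.nn.Linear(4, 2)
x = torch.randn(3, 4)

# no_grad: disables gradient tracking only.
with torch.no_grad():
    y_nograd = model(x)

# inference_mode: disables gradient tracking and also skips
# view/version-counter bookkeeping, producing "inference tensors".
with torch.inference_mode():
    y_inf = model(x)

# It can also be applied as a decorator.
@torch.inference_mode()
def predict(inp):
    return model(inp)

y_dec = predict(x)

# Caveat: an inference tensor cannot be saved for backward outside inference mode.
w = torch.ones(2, requires_grad=True)
try:
    (y_inf * w).sum()
    saved_for_backward_ok = True
except RuntimeError:
    saved_for_backward_ok = False

# Workaround: clone outside inference mode to get a normal tensor.
loss = (y_inf.clone() * w).sum()
loss.backward()
```

So the swap from `torch.no_grad` is safe for pure prediction code. If results may later feed into a training graph, `torch.no_grad` or an explicit `.clone()` avoids the error.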

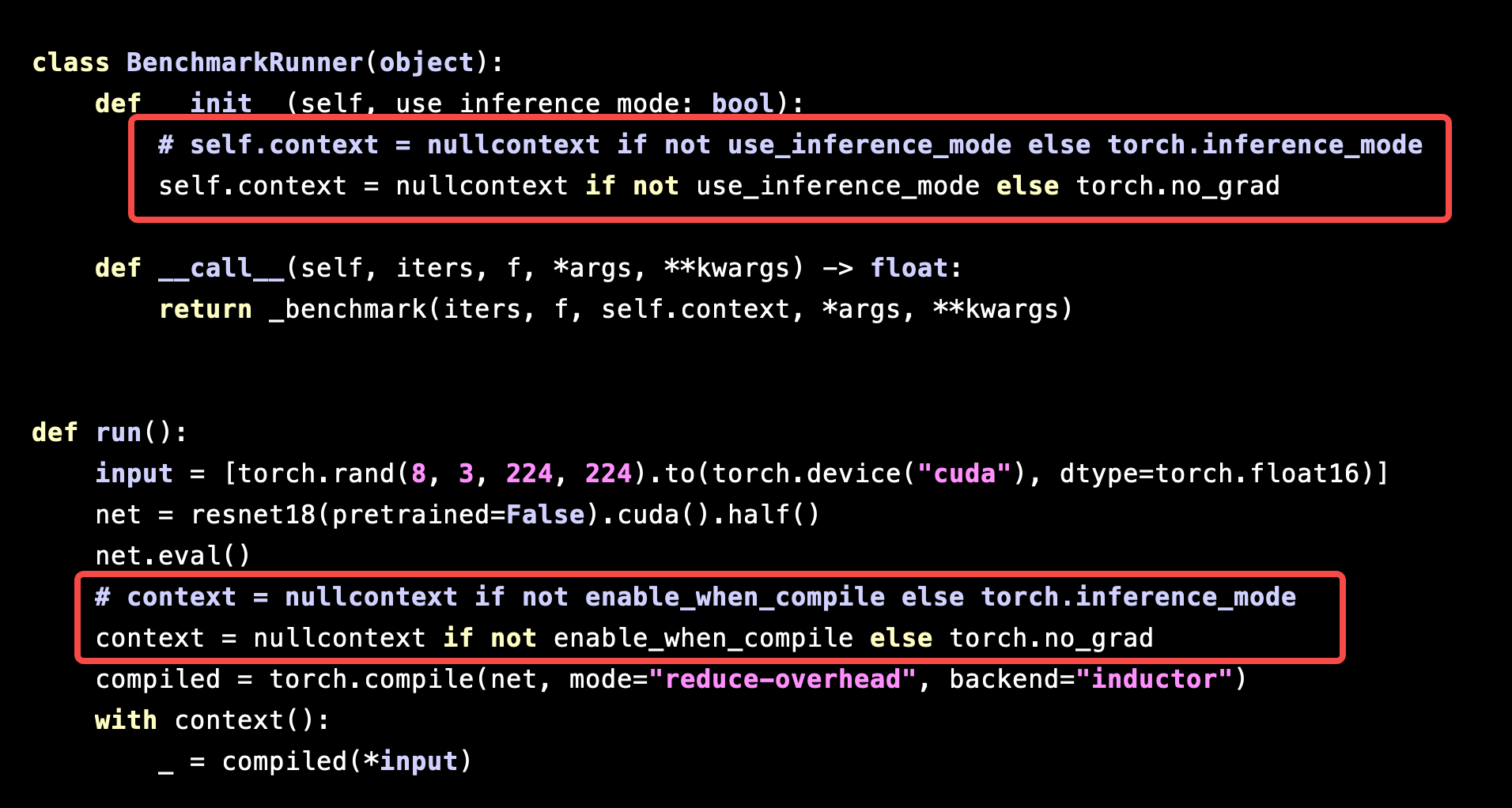

Performance of `torch.compile` is significantly slowed down under `torch.inference_mode` - torch.compile - PyTorch Forums