Lightning Talk: Adding Backends for TorchInductor: Case Study with Intel GPU - Eikan Wang, Intel - YouTube

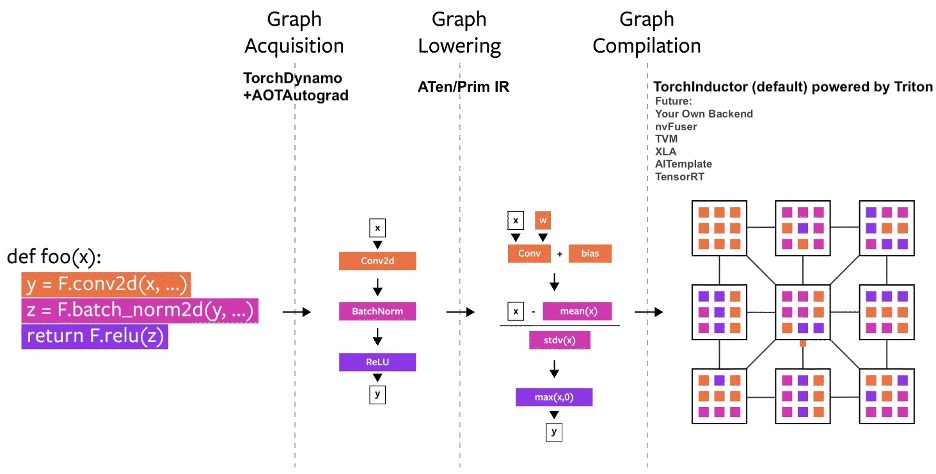

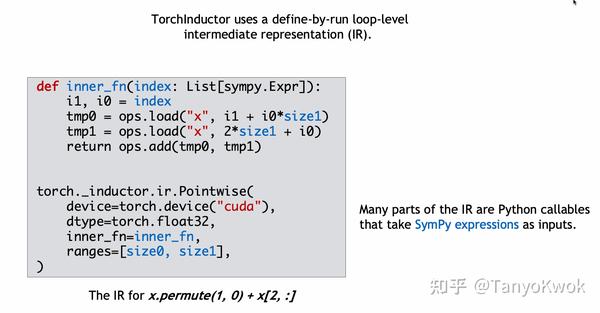

TorchInductor: a PyTorch-native Compiler with Define-by-Run IR and Symbolic Shapes - compiler - PyTorch Dev Discussions

[Torch2 CPU] torch._inductor.ir: [WARNING] Using FallbackKernel: aten.cumsum · Issue #93495 · pytorch/pytorch · GitHub

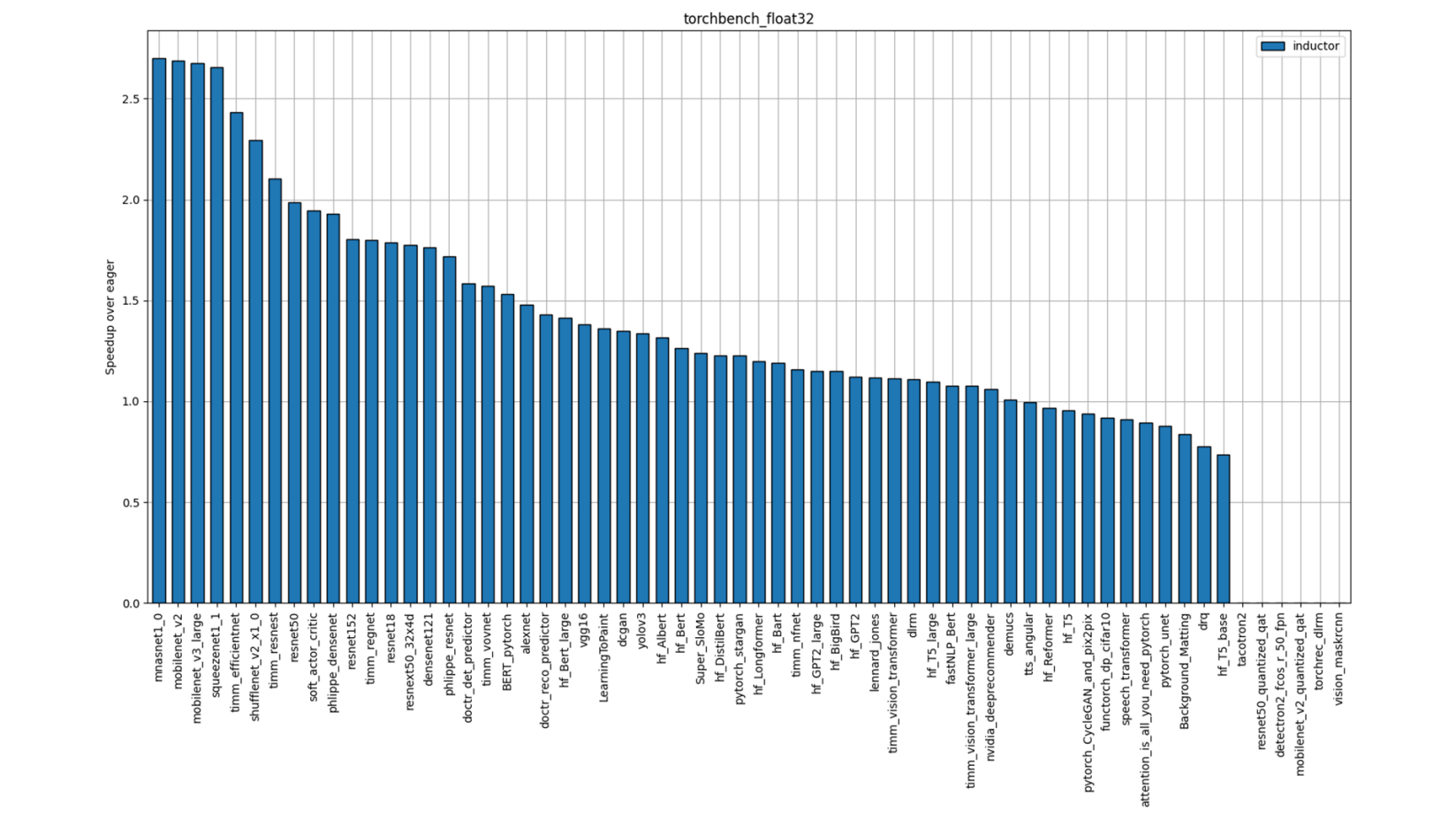

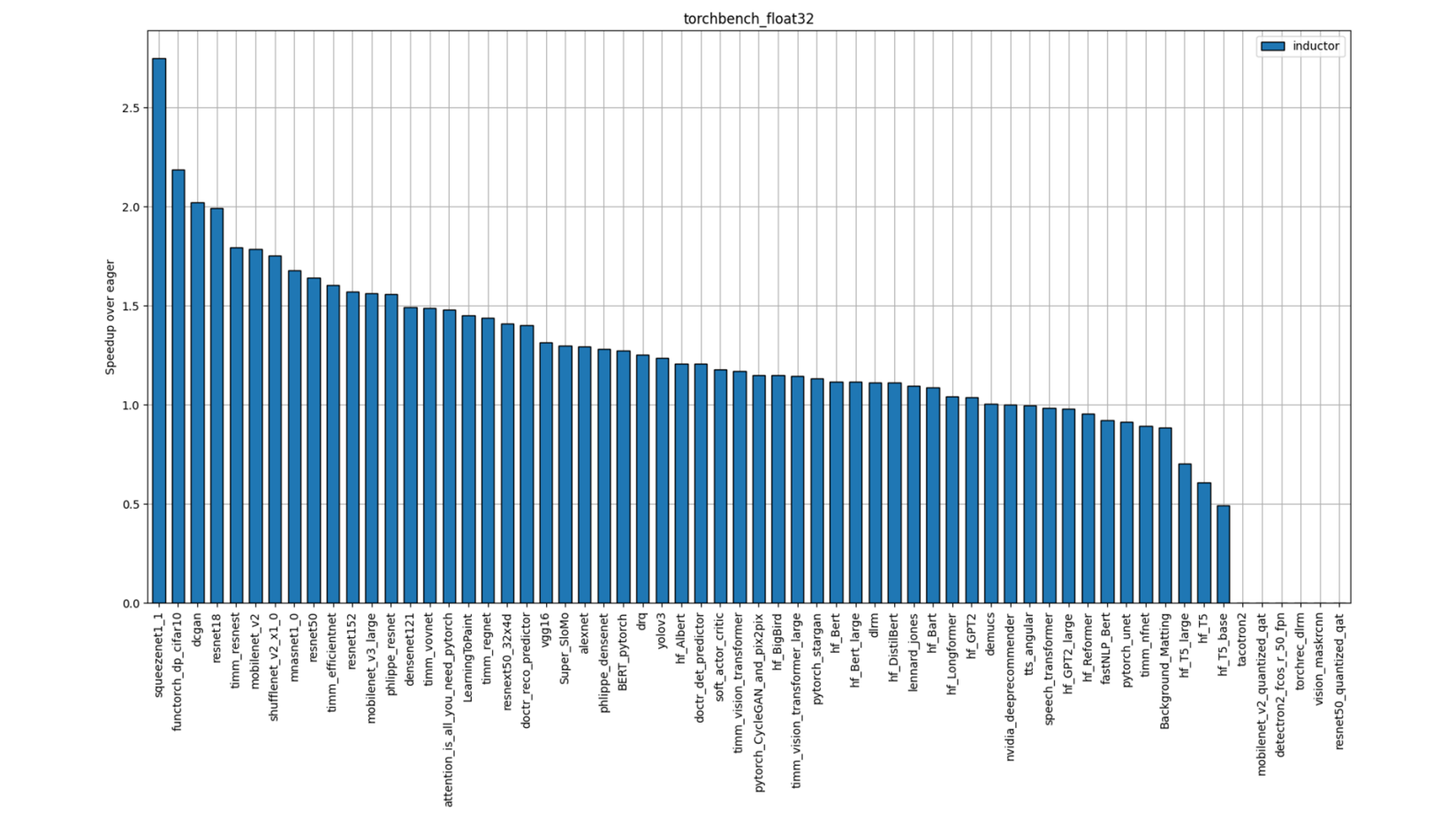

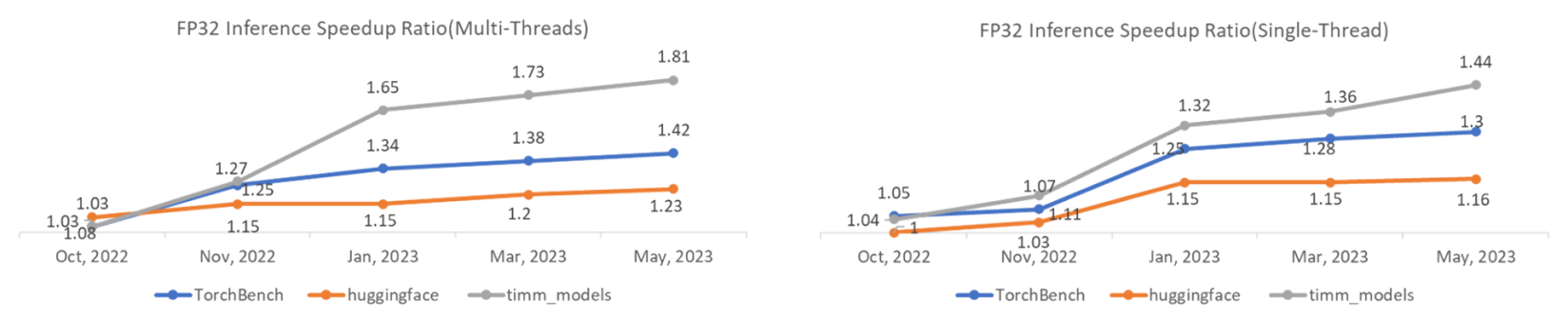

Case study of torch.compile / cpp inductor on CPU: min_sum / mul_sum with 1d / matmul-like with static / dynamic shapes · Issue #106614 · pytorch/pytorch · GitHub

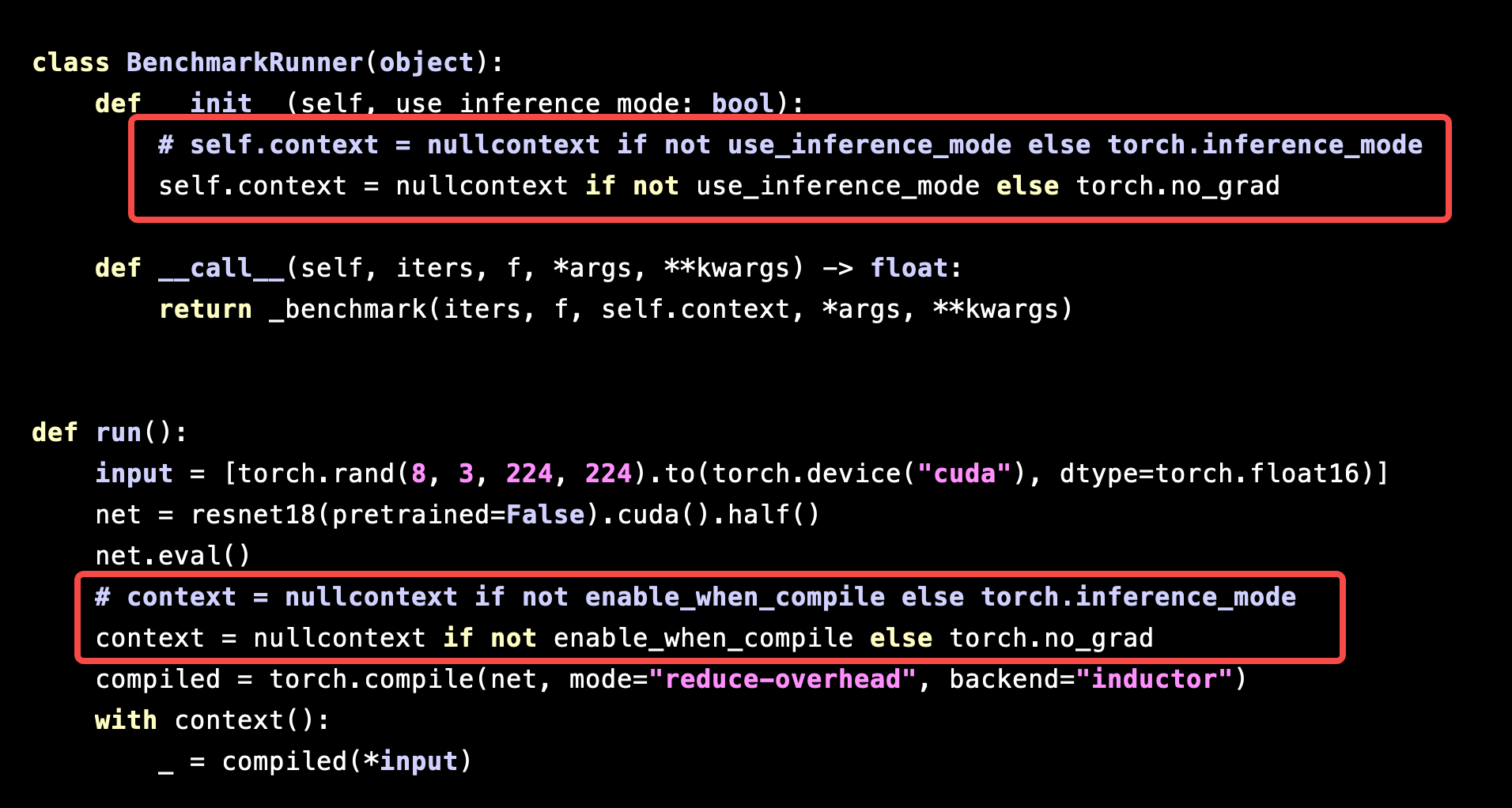

Performance of `torch.compile` is significantly slowed down under `torch.inference_mode` - torch.compile - PyTorch Forums

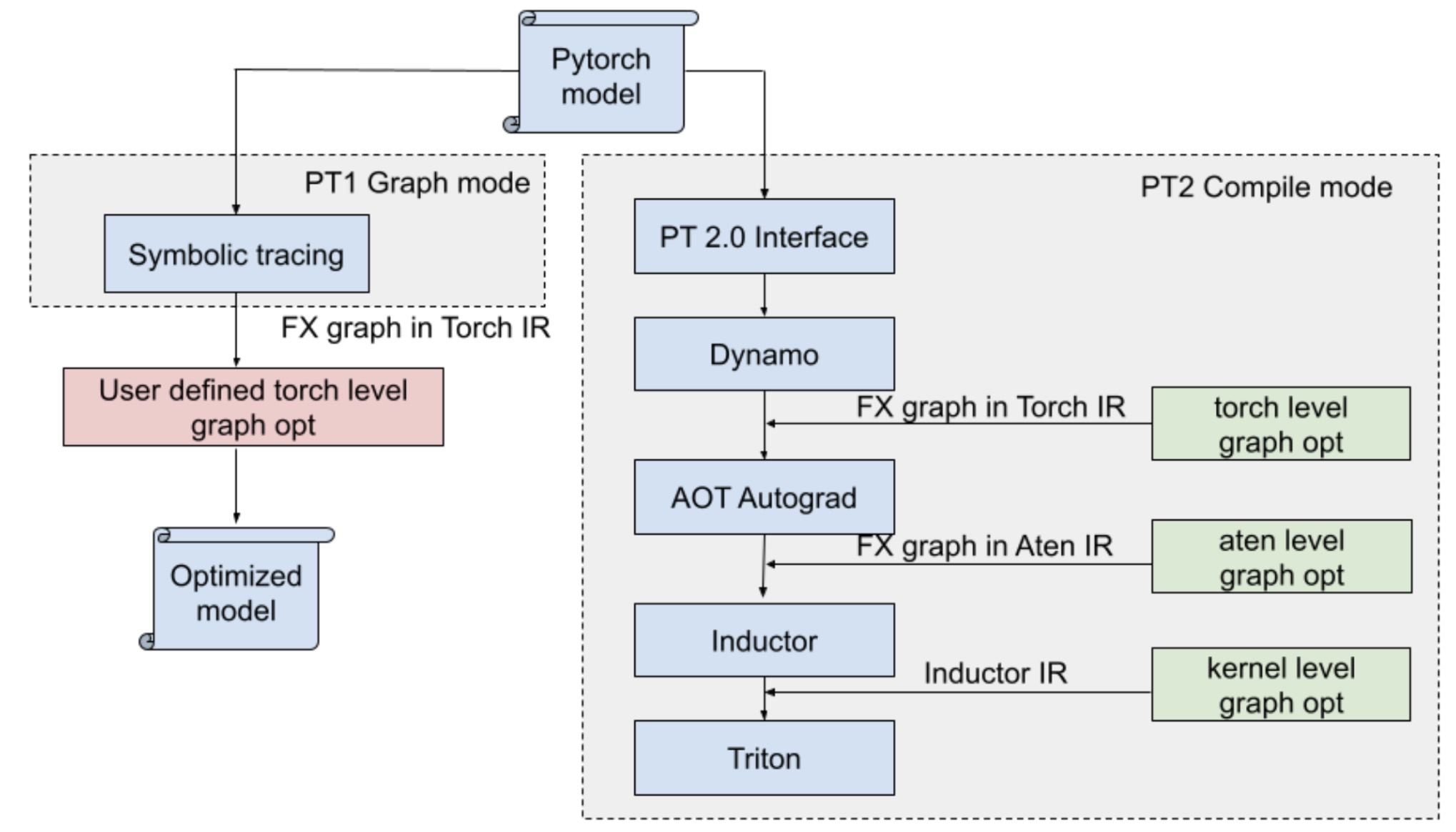

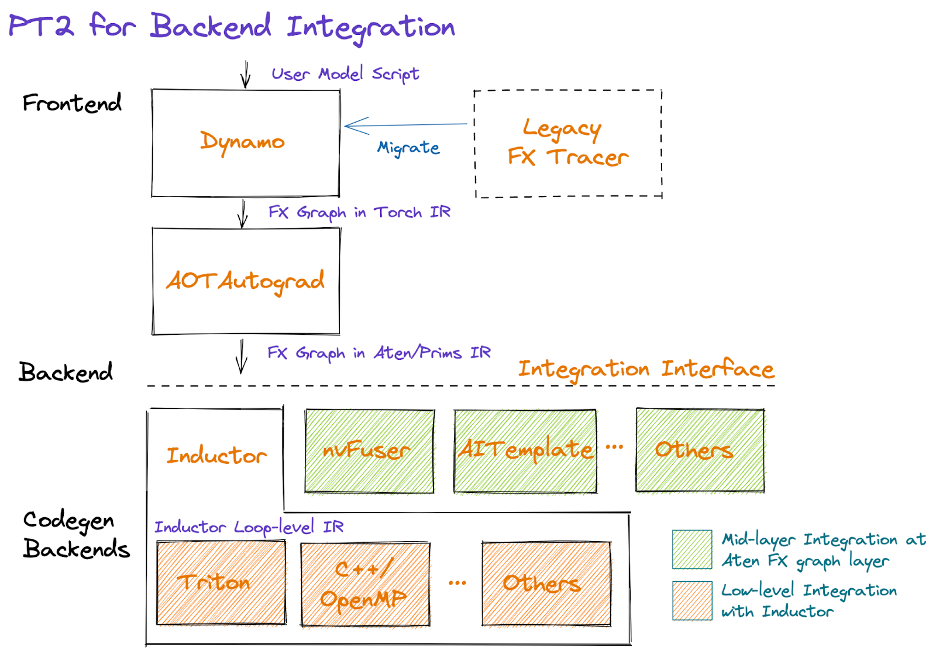

PyTorch 2.0 Ask the Engineers Q&A Series: Deep Dive into TorchInductor and PT2 Backend Integration - YouTube

How Pytorch 2.0 Accelerates Deep Learning with Operator Fusion and CPU/GPU Code-Generation | by Shashank Prasanna | Towards Data Science